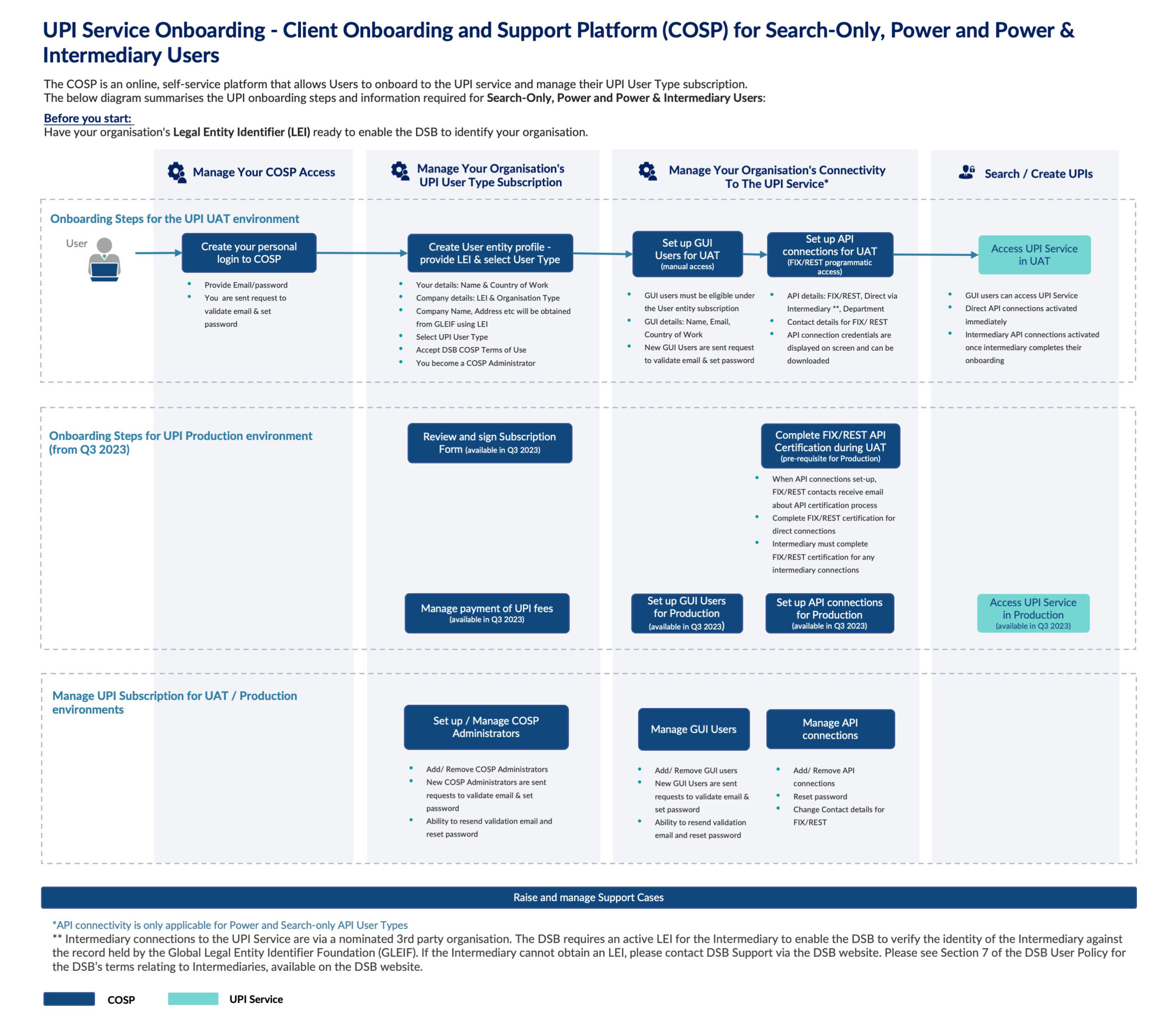

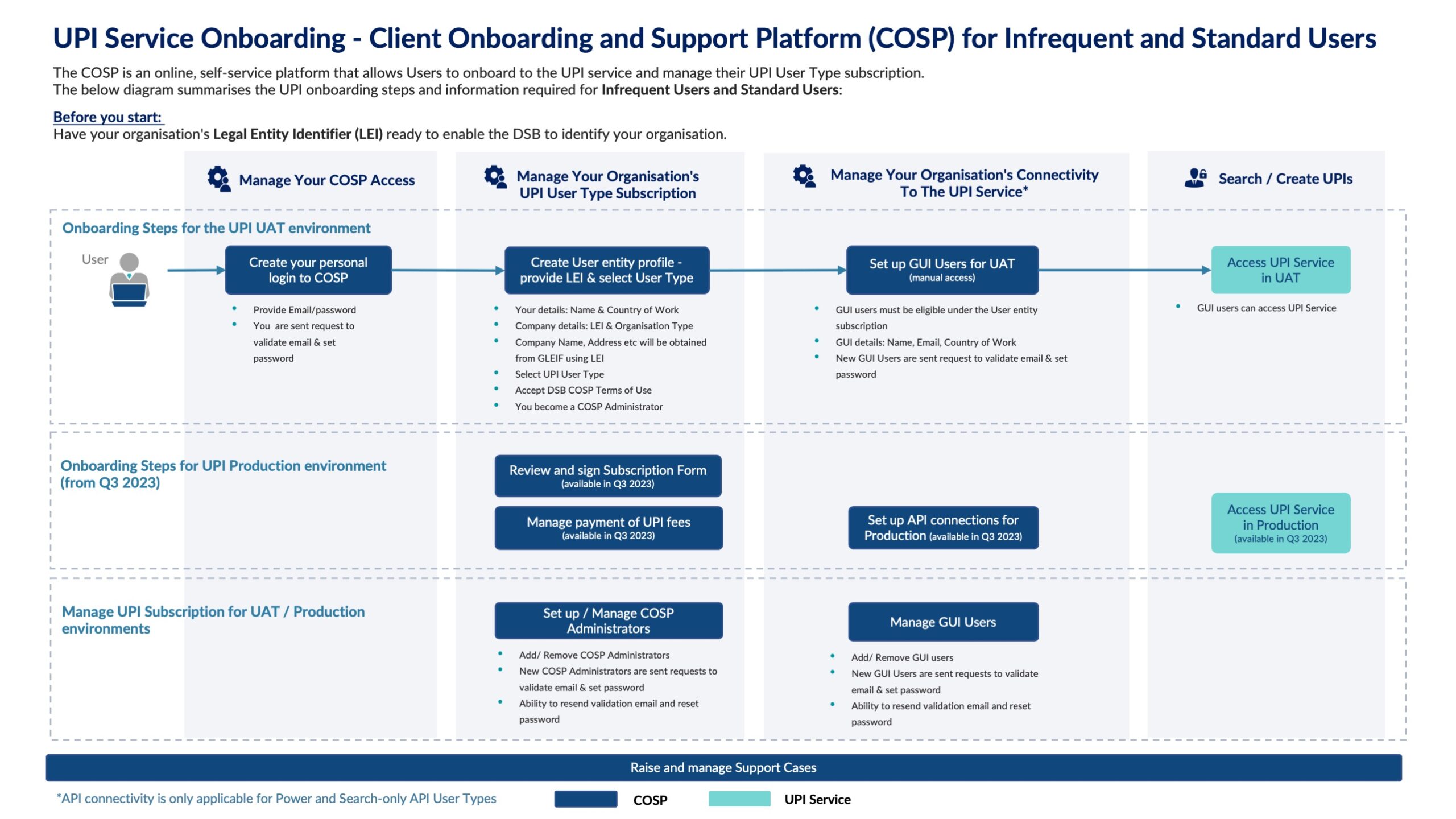

A month ago, I described the DSB’s preparations for what turned out to be a very successful industry load test on 6th – 7th April, the results of which we published last week. Based on that success, we promoted the new high performance code base into production on 22 April.

In this blog, I will examine the performance of the system in Production, before and after the upgrade, to understand the impact of the change to the system.

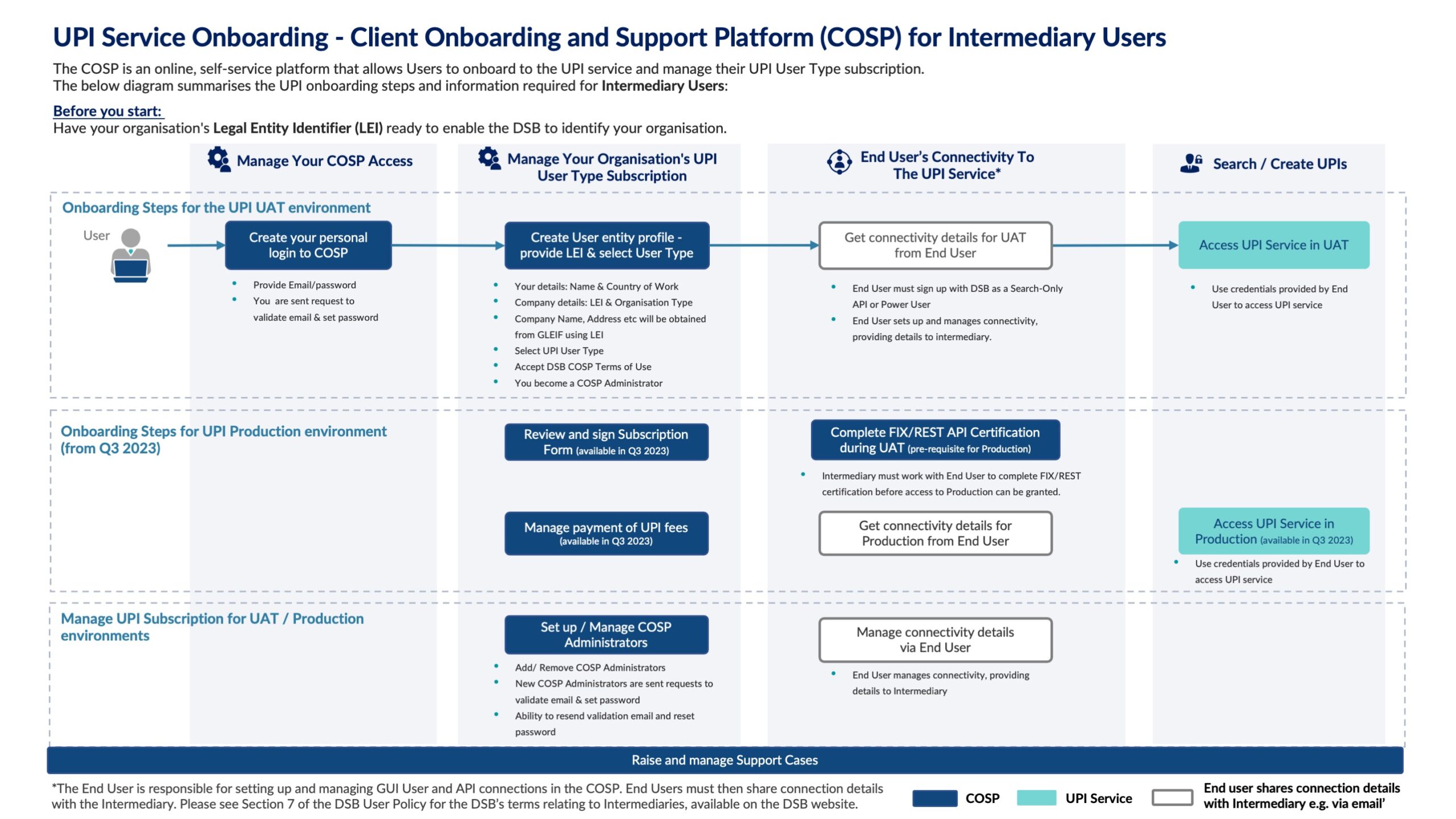

Java Garbage Collection Pause Times

Regular followers of the DSB will know that there were three outages totalling 132 minutes across the first 6 months of the DSB’s operation (incidentally, all this data and more is available on the DSB’s operational status page). The root cause of each was Garbage Collection (GC) in the DSB’s open-source SOLR component, as described at the bottom of the operational status page.

So let’s see how much improvement has occurred as a result of the codebase upgrade.

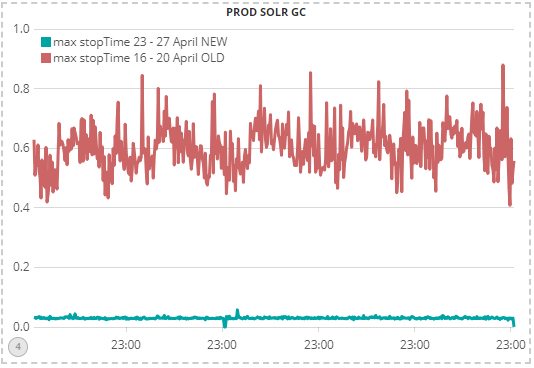

This chart shows that the production environment experienced a dramatic 10x improvement in GC pause times as a result of the new version, from approx 0.6 seconds down to approx 0.06 seconds over the course of a week. GC ‘jitter’ has also reduced substantially, providing greater consistency of performance to user queries.

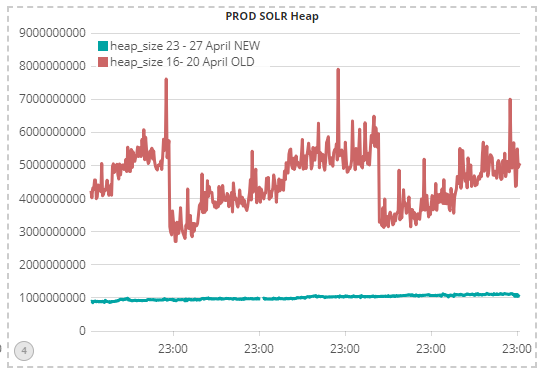

So why are GC pause times so much better than before? Java performance tuning experts will know that the pauses are due to the time needed for memory clean-up as a result of object creation, i.e. the speed with which the Java memory ‘heap’ grows is a major contributory factor to the GC pause time. This chart shows an equally dramatic 5x efficiency gain in memory heap growth from the new codebase:

Incidentally, the shape of the memory growth in the above chart is typical of all Java applications: the memory ‘heap’ grows until garbage collection kicks in and frees up some old ‘dead’ objects (aka ‘garbage’), thereby reducing the heap size. Whilst it looks as if the new codebase does not have the same memory profile, in actual fact it does, and it can just about be discerned in the above chart, showing an ever so slight increase in heap size throughout the week.
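For readers who want to see the sawtooth pattern in miniature, here is a toy model of it. It is only a sketch: the function `simulate_heap` and all of its numbers (allocation rates, threshold, live set) are hypothetical and are not taken from the DSB's systems, and real Java collectors are considerably more sophisticated than a single threshold.

```python
def simulate_heap(alloc_mb_per_min, gc_threshold_mb, live_mb, minutes):
    """Toy heap model: return (heap size per minute, number of collections)."""
    heap = live_mb
    sizes = []
    collections = 0
    for _ in range(minutes):
        heap += alloc_mb_per_min        # newly created objects grow the heap
        if heap >= gc_threshold_mb:     # the collector kicks in at the threshold
            heap = live_mb              # 'dead' objects are freed, live ones remain
            collections += 1
        sizes.append(heap)
    return sizes, collections


# Hypothetical figures, chosen only to illustrate a 5x slower heap growth.
old_sizes, old_gcs = simulate_heap(alloc_mb_per_min=50, gc_threshold_mb=1000,
                                   live_mb=200, minutes=600)
new_sizes, new_gcs = simulate_heap(alloc_mb_per_min=10, gc_threshold_mb=1000,
                                   live_mb=200, minutes=600)

print("collections with fast heap growth:", old_gcs)
print("collections with slow heap growth:", new_gcs)
```

In this toy model both runs trace the same sawtooth shape, but the slower-growing heap reaches the threshold far less often, so its teeth are much wider and harder to spot on a shared axis.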

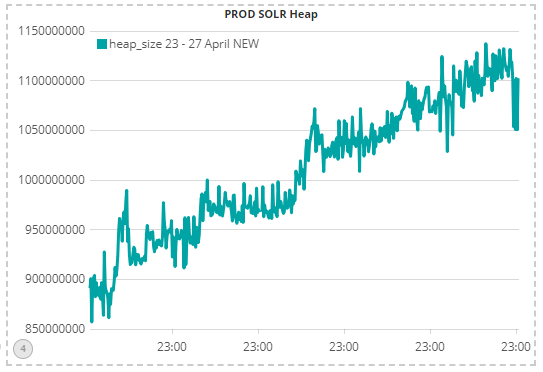

The below chart shows this in more detail (it is just a scaled up version of the above chart, focusing on the new codebase only):

Impact on Latency

So what has been the impact of this change on the processing time of user messages (aka latency)? The table below shows the latency experience for FIX API users over two weeks, covering both the periods before the upgrade and after. It shows a 5x-10x improvement in performance, and critically, brings the DSB back within its performance SLA of processing 99% of all user messages within 1,000ms:

| Date | 50th percentile latency (ms) | 90th percentile latency (ms) | 99th percentile latency (ms) |
| --- | --- | --- | --- |
| April 16th 2018 | 325 | 1,108 | 2,226 |
| April 17th 2018 | 352 | 1,150 | 2,293 |
| April 18th 2018 | 354 | 1,223 | 2,413 |
| April 19th 2018 | 354 | 1,225 | 2,392 |
| April 20th 2018 | 335 | 1,149 | 2,259 |
| NEW CODEBASE | | | |
| April 23rd 2018 | 46 | 147 | 240 |
| April 24th 2018 | 44 | 146 | 214 |
| April 25th 2018 | 38 | 138 | 207 |
| April 26th 2018 | 47 | 146 | 209 |
| April 27th 2018 | 115 | 137 | 198 |
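The figures in the table can be checked against the SLA with a few lines of code. The sketch below copies the daily percentiles from the table and compares the weekly averages before and after the upgrade; the helper names `sla_met` and `improvement` are illustrative only and do not describe how the DSB itself produces its reports.

```python
from statistics import mean

# (50th, 90th, 99th) percentile latency in ms, copied from the table above.
before = {
    "2018-04-16": (325, 1108, 2226),
    "2018-04-17": (352, 1150, 2293),
    "2018-04-18": (354, 1223, 2413),
    "2018-04-19": (354, 1225, 2392),
    "2018-04-20": (335, 1149, 2259),
}
after = {
    "2018-04-23": (46, 147, 240),
    "2018-04-24": (44, 146, 214),
    "2018-04-25": (38, 138, 207),
    "2018-04-26": (47, 146, 209),
    "2018-04-27": (115, 137, 198),
}

SLA_MS = 1000  # SLA: 99% of all user messages processed within 1,000 ms


def sla_met(days):
    """True if the 99th percentile is within the SLA on every day."""
    return all(p99 <= SLA_MS for _, _, p99 in days.values())


def improvement(old, new, column):
    """Ratio of average latency before vs after for one percentile column."""
    return mean(d[column] for d in old.values()) / mean(d[column] for d in new.values())


print("SLA met before upgrade:", sla_met(before))
print("SLA met after upgrade: ", sla_met(after))
for column, label in enumerate(("50th", "90th", "99th")):
    print(f"{label} percentile improvement: {improvement(before, after, column):.1f}x")
```

Averaging the daily percentiles is a simplification used here for illustration; it is not the same as computing a percentile over all messages in the week.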

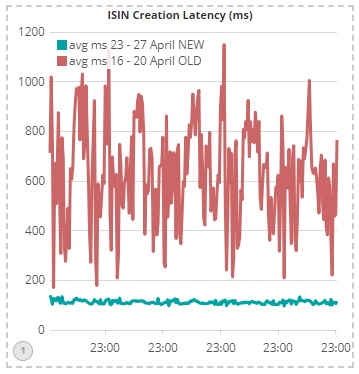

The intra-day chart below shows the same 5x-10x improvement in latency within the ISIN Engine core as well as the major reduction in latency jitter:

A technical note for the sharp-eyed amongst you: the above chart is the latency within the core and not end-to-end latency. It is also average latency, not median or 99th percentile.
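The distinction matters because an average can hide a slow tail. The sketch below uses a made-up sample (98 fast messages and 2 slow ones, not DSB data) and a simple nearest-rank `percentile` helper, which is one common definition among several, to show how the three statistics can diverge.

```python
import math
from statistics import mean


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


# Hypothetical latencies in ms: most messages are fast, a few are very slow.
latencies_ms = [40] * 98 + [2000] * 2

average_ms = mean(latencies_ms)
median_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

print("average:", average_ms)
print("median: ", median_ms)
print("99th:   ", p99_ms)
```

In this sample the average sits well above the median yet far below the 99th percentile, which is why an SLA expressed as a percentile cannot be read directly off a chart of averages.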

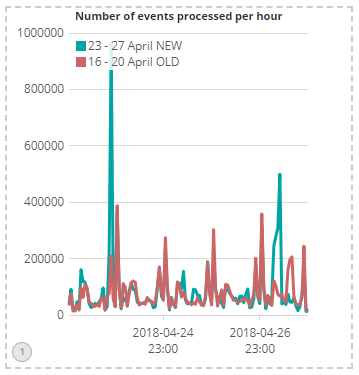

Finally, we should also examine the volume of events being processed across the two weeks, just in case the better performance was partially or fully due to lower volumes. This chart shows that the opposite was the case: last week had higher peaks than the week before, with the system at peak handling a million events per hour without breaking into a sweat (note that each user message translates into multiple events):

Next Steps

Firstly, if you or your firm find this kind of technology-related information useful and you wish to provide input into the DSB’s technology strategy, then you have the opportunity to participate in the DSB Technology Advisory Committee by emailing secretariat@anna-dsb.com. This opportunity will close once the committee is formed in May, so please do contact us soon.

With the technology platform of the DSB now in a state of high-performance tune, our focus will move to product and usability enhancements as well as process improvements to continue to increase our transparency and engagement with industry.

Sassan Danesh, Management Team, DSB.